A recent statement by the Global Commission on the Stability of Cyberspace (GCSC) has highlighted the growing acceptance and adoption of the concept of a "Public Core" of the Internet, and a norm (adopted by governments and intergovernmental organisations) on non-interference with this Core.

But what exactly is the "Public Core" of the Internet?

The term "Public Core" was defined by the GCSC, and we'll take a look at the GCSC's own definition later in this post, but first, it's worth looking back at the Internet's history.

In the beginning

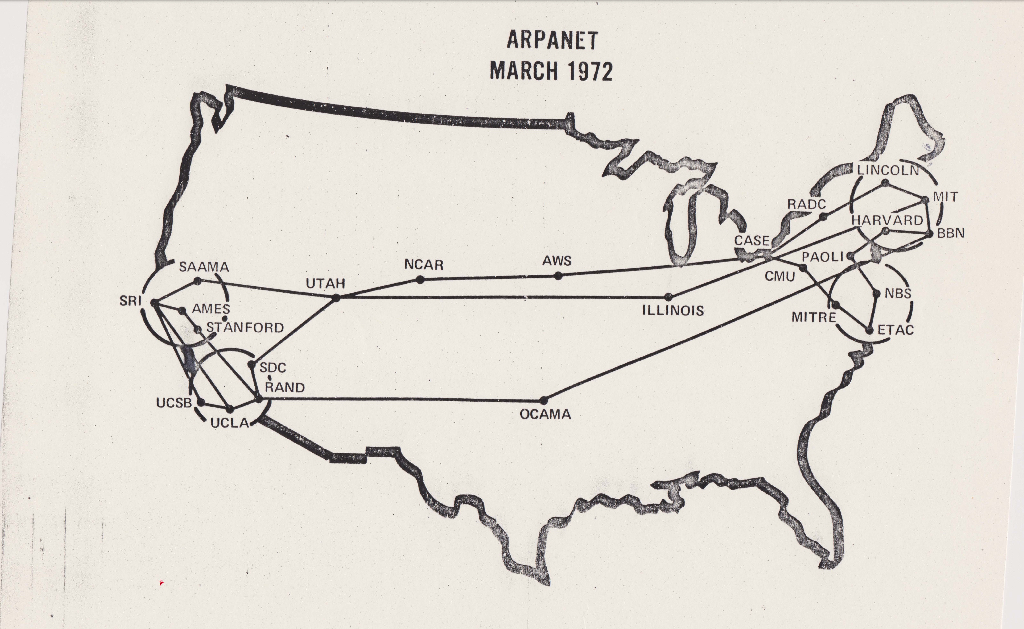

In the beginning, where wizards stayed up late, was the ARPANET. It's a persistent urban myth that the Internet was designed to provide a communications network that could withstand a nuclear first strike (if it had been, then some of the security issues that have since plagued the modern Internet might never have come to pass!). The real motive for creating it was to allow more efficient use of extremely scarce (and expensive) computing resources on (then newly-introduced) time-sharing computer systems. These systems allowed multiple users to run programs simultaneously, unlike the previous generation, which could only run a single program at a time. Despite this capability, these computers still sat idle most of the time, and the hope was that by interconnecting them, users who had terminal access at one site (whether academic or military) could make use of idle CPU time at another.

The resilience of the Internet was really a byproduct: having a "single point of failure" would hurt the reliability of the system, and reduce its cost-effectiveness. So it was engineered to be resilient and route around faulty or offline nodes.
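
To make "routing around" a failed node concrete, here is a minimal sketch in Python. It is not how ARPANET routing was actually implemented: the four-site topology, the site names and the `find_route` helper are all hypothetical, and a plain breadth-first search stands in for a real routing protocol.

```python
from collections import deque


def find_route(links, source, destination, offline=frozenset()):
    """Return a shortest path from source to destination that avoids
    every node listed in `offline`, or None if no such path exists."""
    if source in offline or destination in offline:
        return None
    queue = deque([[source]])
    visited = {source}
    while queue:
        path = queue.popleft()
        node = path[-1]
        if node == destination:
            return path
        for neighbour in links.get(node, ()):
            if neighbour not in visited and neighbour not in offline:
                visited.add(neighbour)
                queue.append(path + [neighbour])
    return None


# Hypothetical network: four sites, each linked to two others,
# so there are always two independent paths between A and D.
LINKS = {
    "A": ["B", "C"],
    "B": ["A", "D"],
    "C": ["A", "D"],
    "D": ["B", "C"],
}

print(find_route(LINKS, "A", "D"))                      # ['A', 'B', 'D']
print(find_route(LINKS, "A", "D", offline={"B"}))       # ['A', 'C', 'D']
print(find_route(LINKS, "A", "D", offline={"B", "C"}))  # None
```

With site B offline, traffic from A still reaches D via C. Only when every intermediate site fails does the route disappear, which is the situation a design without a single point of failure is meant to make unlikely.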

NSFNET and the "backbone"

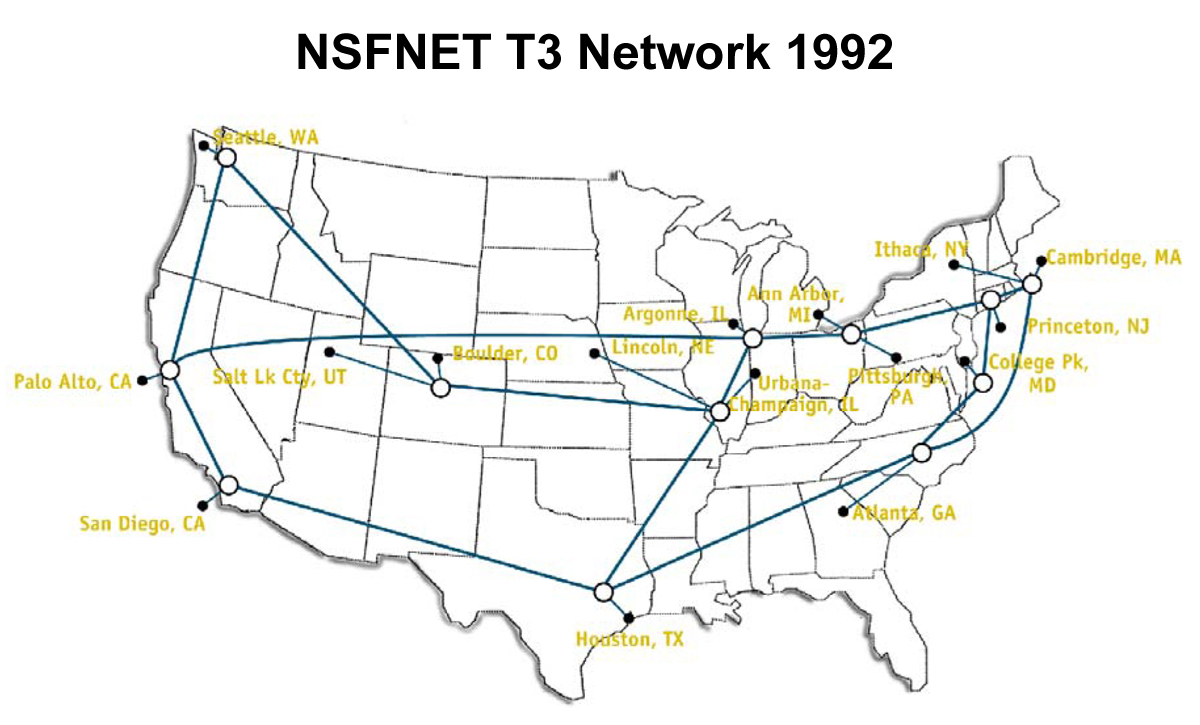

In time, the ARPANET was connected to, and ultimately absorbed by, the NSFNET (the immediate predecessor of the modern Internet). Operated by the National Science Foundation, the NSFNET initially consisted of six networking sites, using 56kbit/s links, which peered with the “legacy” ARPANET.

This network, which by 1987 had been upgraded to 1.5Mbit/s T1 links, could be considered the "backbone" of the Internet, since it handled almost all packet-switched network traffic between sites on the NSFNET.

Explosion

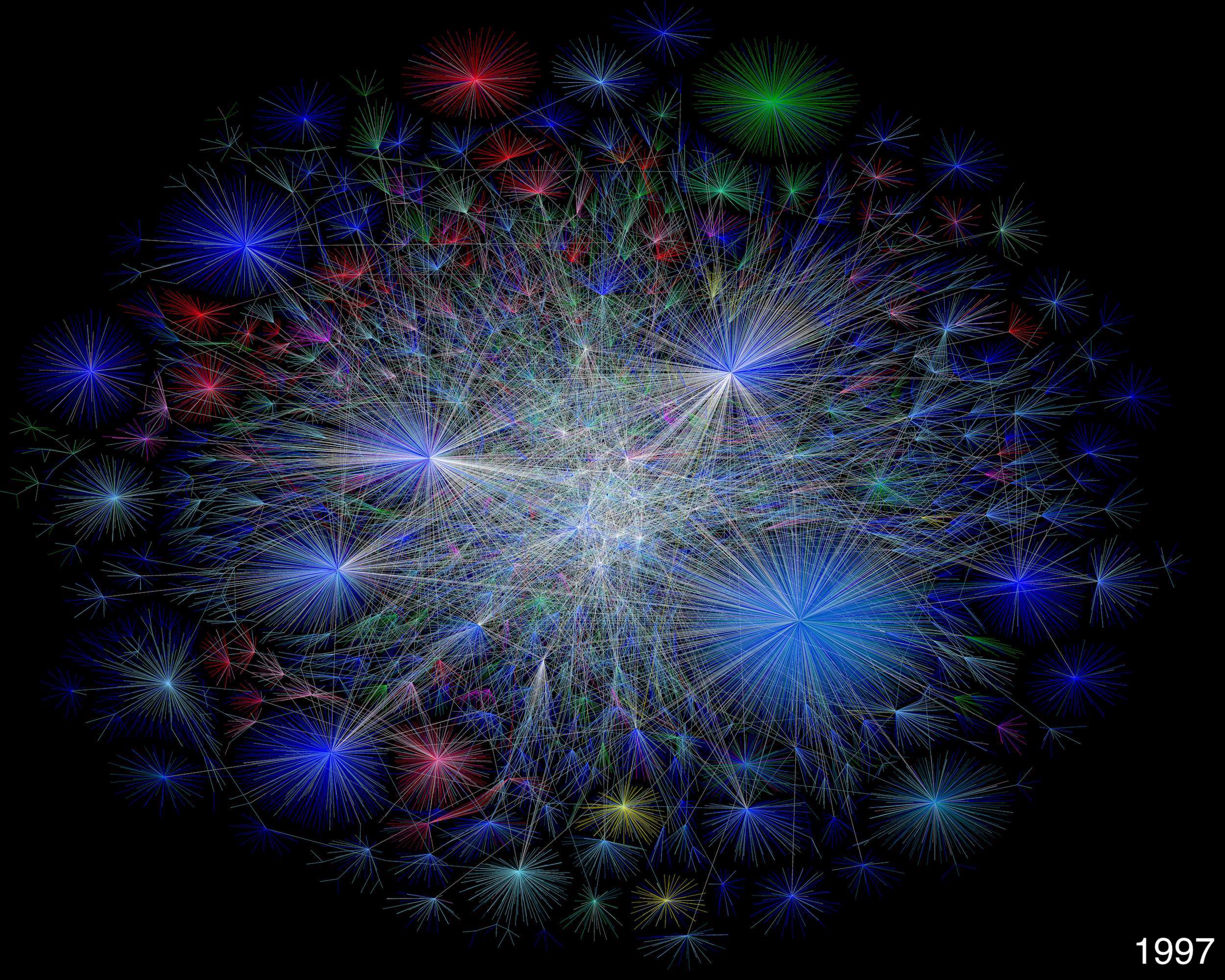

The modern Internet was born in 1991, when the NSF began to allow private companies to interconnect with the NSFNET backbone. The amount of data carried over the new private networks soon outstripped that handled by the NSFNET, which would ultimately be decommissioned in 1995. This coincided with changes to the governance of network resources such as IP addresses and domain names, with management of these resources being assigned to Network Solutions in 1993. IP address management (outside of the RIPE and APNIC regions) was subsequently assigned to ARIN, and management of the DNS root zone to ICANN.

The Public Core

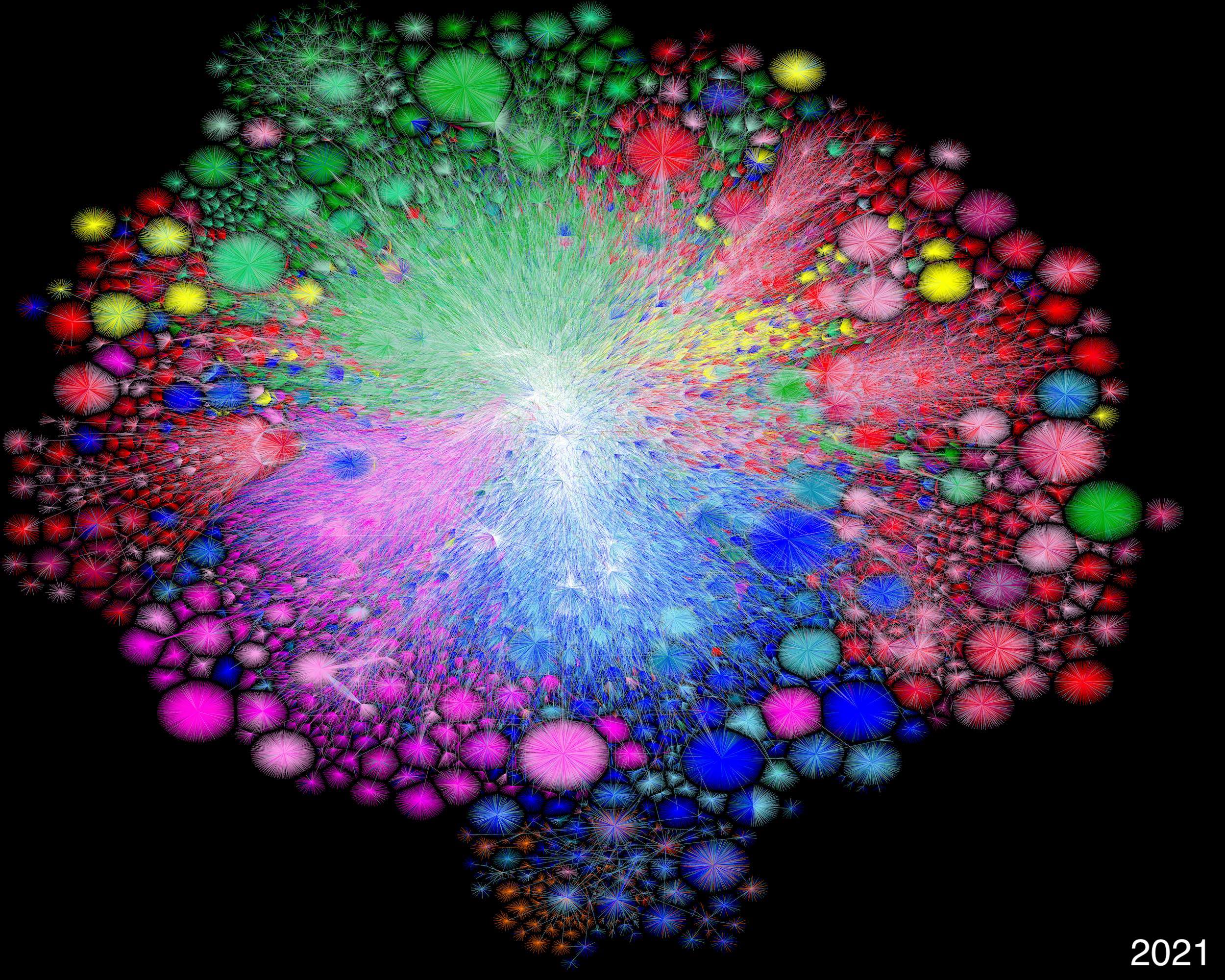

What distinguishes the Public Core from the Backbone of the NSFNET days is its lack of central control. The modern Internet has no "backbone": no single network carries more than a small fraction of the total network traffic. While ICANN remains in control of the root zone, top-level domains are managed by separate organisations (at the time of writing, the 1,517 TLDs in the root zone are managed by 792 different registry operators), and IP addresses are managed by five different Regional Internet Registries.

Therefore, the Public Core consists of a diverse range of organisations, including for-profit companies, non-profits, charities, academic institutions and government agencies, spread out all over the world.

The GCSC breaks these organisations down into four different functions:

1. Packet routing and forwarding

Packet routing and forwarding includes the equipment, facilities, information, protocols, and systems which facilitate the transmission of packetized communications from their sources to their destinations. This includes Internet Exchange Points (the physical sites where Internet bandwidth is produced) and the peering and core routers of major networks which transport that bandwidth to users. It includes systems needed to assure routing authenticity and defend the network from abusive behavior. It includes the design, production, and supply-chain of equipment used for the above purposes. It also includes the integrity of the routing protocols themselves and their development, standardization, and maintenance processes.

2. Naming and numbering systems

Naming and numbering systems include systems and information used in the operation of the Internet's Domain Name System, including registries, name servers, zone content, infrastructure and processes such as DNSSEC used to cryptographically sign records, and the whois information services for the root zone, inverse-address hierarchy, country-code, geographic, and internationalized top level domains and for new generic and non-military generic top-level domains. It includes frequently used public recursive DNS resolvers. It includes the systems of the Internet Assigned Numbers Authority and the Regional Internet Registries which make available and maintain the unique allocation of Internet Protocol addresses, Autonomous System Numbers, and Internet Protocol Identifiers. It also includes the naming and numbering protocols themselves and the integrity of the standardization processes and outcomes for protocol development and maintenance.

3. Cryptographic mechanisms of security and identity

The cryptographic mechanisms of security and identity include the cryptographic keys which are used to authenticate users and devices and secure Internet transactions, and the equipment, facilities, information, protocols, and systems which enable the production, communication, use, and deprecation of those keys. This includes PGP keyservers, Certificate Authorities and their Public Key Infrastructure, DANE and its supporting protocols and infrastructure, certificate revocation mechanisms and transparency logs, password managers, and roaming access authenticators. It also includes the integrity of the standardization processes and outcomes for cryptographic algorithm and protocol development and maintenance and the design, production, and supply-chain of equipment used to implement cryptographic processes.

4. Physical transmission media

Physical transmission media includes physical cable systems and installations for wired communications serving the public, whether fiber or copper. This includes terrestrial and undersea cables and the landing stations, datacenters, and other physical facilities which support them. It includes the support systems for transmission, signal regeneration, branching, multiplexing, and signal-to-noise discrimination. It is understood to include cable systems that serve regions or populations, but not those that serve the customers of individual companies.

Are you in the "Public Core"?

Operators of top-level domains are a key part of the Public Core of the Internet. As the existence of the GCSC's Call to Protect the Public Core of the Internet demonstrates, TLD operators are in the firing line from a variety of security threats, from a wide range of hostile actors.

Organisations that are part of the Public Core must therefore prioritise the security and stability of their operations, and integrate this into every aspect of their business. Working with skilled technology partners - such as CentralNic - is increasingly becoming the best way to mitigate the security and stability risks faced by gTLD and ccTLD registry operators.

CentralNic's registry platform already supports over 100 top-level domains, including both generic and country-code TLDs, and is managed through a comprehensive security and business continuity management system certified against ISO 27001 (information security) and ISO 22301 (business continuity). CentralNic is recognised as an Operator of Essential Services (OES) in the UK and EU under the Network and Information Systems (NIS) Directive.